Type // to enjoy our AI assistance as you write on Google Docs.

Type // craft compelling emails and personalized replies.

Explore a more powerful Bing sidebar alternative for Chrome.

Find HIX.AI's comprehensive responses among typical search results.

Select any text online to translate, rewrite, summarize, etc.

Type // to compose concise yet powerful Twitter posts that trend.

Type // to create engaging captions for your Instagram posts.

Type // to draft interactive Facebook posts that engage your community.

Type // to provide valuable, upvoted answers on Quora.

Type // to craft Reddit posts that resonate with specific communities.

Summarize long YouTube videos with one click.

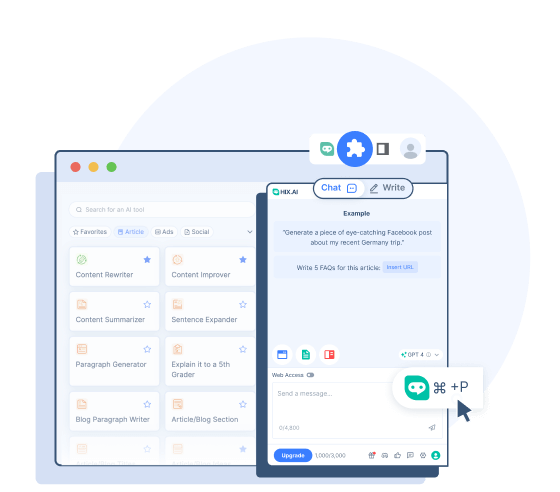

- HIX Chat (ChatGPT Alternative)

A leading AI chatbot that can respond with up-to-date information.

- HIX Chat (No Login)

Experience unrestricted access to HIX Chat. No login is needed.

- GPT-4o (No Login)

Try the advanced power of GPT-4o with less restriction and smoother connection.

- ChatGPT (No Login)

Use ChatGPT free without logging in!

- GPT-4 Chat Online

Use GPT-4 chatbot free online.

- Math AI Solver

Get step-by-step solutions to any math homework problem.

GPT-4o: The Next Evolution of OpenAI's Language Models

GPT-4o (the "o" stands for "omni") is a state-of-the-art multimodal large language model developed by OpenAI and released on May 13, 2024. It builds on the success of the GPT family of models and introduces several advances in understanding and generating content across different modalities.

GPT-4o offers better natural language processing and faster response times than previous models. It can natively understand and generate text, images, and audio, enabling more intuitive and interactive user experiences. As a result, GPT-4o can not only answer knowledge-based questions and create text, but also analyze and describe images and videos.

GPT-4o's Capabilities

OpenAI's enhancements to this model improve its audio, vision, and text capabilities.

Multimodal Input and Output

Each input and output of GPT-4o can be any combination of text, audio, and images. Unlike OpenAI's previous models, GPT-4o processes text, audio, and images natively, without converting them to another form first (it can read images, hear audio, and output them directly). This lets GPT-4o process these inputs faster and understand them better.

Real-Time Natural Conversations

The enhanced voice recognition and response capabilities of GPT-4o enable it to hold spoken conversations (even in different languages) without noticeable delays. The model can pick up on the tone and emotions of the speakers and respond appropriately. It can also speak in a natural voice with emotional nuance, enabling more sensitive communication.

Visual Content Analysis and Editing

GPT-4o can better understand and edit visual content. It can read the graphics, text, or data in an image and understand the meaning behind them. You can upload images for analysis to get more precise insights and explanations. The model can also create or edit high-quality images that closely follow your prompt.
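For developers, image analysis like this is usually requested by attaching the image to a chat message. The sketch below is a minimal illustration, assuming the message layout used by OpenAI's Chat Completions API (a `content` list mixing `text` and `image_url` parts). The helper name `build_image_message` is hypothetical and not part of any library.

```python
import base64


def build_image_message(question, image_bytes, mime_type="image/png"):
    """Build one user message that pairs a question with an inline image.

    Hypothetical helper: the image is embedded as a base64 data URL so the
    message is self-contained and needs no public image hosting.
    """
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": question},
            {
                "type": "image_url",
                "image_url": {"url": f"data:{mime_type};base64,{encoded}"},
            },
        ],
    }
```

The returned dictionary can be placed in the `messages` list of a request that targets a vision-capable model such as `gpt-4o`.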

Memory and Contextual Awareness

GPT-4o's upgraded context window lets it maintain context over longer conversations. It supports up to 128,000 tokens, enabling detailed analysis and coherent conversations.
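To illustrate what a 128,000-token window means in practice, here is a rough sketch of keeping a conversation within that budget by dropping the oldest messages first. The four-characters-per-token ratio is only a common rule of thumb assumed for illustration, not the model's real tokenizer, and both function names are hypothetical.

```python
CONTEXT_WINDOW_TOKENS = 128_000  # context size stated above
CHARS_PER_TOKEN = 4  # assumption: rough heuristic, not an exact tokenizer


def estimate_tokens(text):
    """Very rough token estimate based on character count."""
    return max(1, len(text) // CHARS_PER_TOKEN)


def trim_history(messages, budget=CONTEXT_WINDOW_TOKENS):
    """Keep the most recent messages whose estimated total fits the budget."""
    kept = []
    used = 0
    # Walk from newest to oldest so recent context is preserved first.
    for message in reversed(messages):
        cost = estimate_tokens(message["content"])
        if used + cost > budget:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept
```

For accurate counts, a real tokenizer should replace `estimate_tokens`; the trimming logic stays the same.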

GPT-4o vs GPT-4 vs GPT-3.5

Want to know how GPT-4o is different from GPT-4 and GPT-3.5? Here are their key differences:

GPT-4o

- GPT-4o was initially released in May 2024.

- It is a more advanced multimodal model with faster speeds and lower latency when responding to audio and video inputs.

- GPT-4o is trained on data up to Oct 2023.

- GPT-4o performs better on benchmarks of reasoning, speech recognition, and visual capabilities.

- It improves significantly on text processing in non-English languages.

GPT-4

- GPT-4 was initially released in March 2023.

- It is a multimodal model, meaning it can understand image and voice inputs along with text prompts.

- GPT-4 is trained on more recent data than GPT-3.5, up to Dec 2023 for the GPT-4 Turbo variant.

- GPT-4 performs better than GPT-3.5 in areas like coding, writing, reasoning, and avoiding disallowed content.

- GPT-4 is more reliable and creative, and has better scores on benchmarks than GPT-3.5.

GPT-3.5

- GPT-3.5 was released in November 2022. It powers the free version of ChatGPT.

- GPT-3.5 is limited to text input and output.

- It is trained on older data up to Sep 2021.

- GPT-3.5 can sometimes be less reliable and creative when generating responses.

How to Access GPT-4o

GPT-4o has been available since its release. There are several ways to access it:

Use GPT-4o on ChatGPT

OpenAI lets ChatGPT users access this new model directly in the chatbot. Free users have access with a message limit and can only interact with the model through text. For paid ChatGPT Plus users, these restrictions are lifted.

Use GPT-4o with OpenAI's API

OpenAI has also made GPT-4o available as a model option through its API. Developers can integrate GPT-4o into their projects or applications.
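As a minimal sketch of what such an integration can look like, the example below builds and sends a request to OpenAI's Chat Completions endpoint using only the Python standard library. It assumes an API key is stored in the `OPENAI_API_KEY` environment variable. The function names `build_chat_request` and `ask` are hypothetical helpers, and error handling is left out for brevity.

```python
import json
import os
import urllib.request

API_URL = "https://api.openai.com/v1/chat/completions"


def build_chat_request(prompt, api_key, model="gpt-4o"):
    """Prepare (but do not send) a chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def ask(prompt):
    """Send the prompt and return the model's reply text.

    Assumes OPENAI_API_KEY is set; makes a network call when invoked.
    """
    api_key = os.environ["OPENAI_API_KEY"]
    request = build_chat_request(prompt, api_key)
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

Calling `ask("Summarize GPT-4o in one sentence.")` would return the reply as a string. In practice, many projects use OpenAI's official client library instead of raw HTTP requests.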

Use GPT-4o on HIX.AI

If you need a more convenient way to access GPT-4o, you can try it on HIX.AI. It's free to try without having to log in. If you can't access GPT-4o through the official methods, this is another reliable way to use this innovative model.

Why Use GPT-4o on HIX.AI

Accessing GPT-4o on HIX.AI comes with several benefits:

No Login Required

Experience the convenience of instant access with HIX.AI. Just navigate to our GPT-4o page and you can start interacting with GPT-4o right away.

Smoother Connection

When accessing GPT-4o on HIX.AI, you're less likely to experience server issues. We strive to minimize latency and maintain high performance for your connection to this model.

Unrestricted Access

We don't impose any restrictions on access to our GPT-4o chatbot. Wherever and whenever you are, you can experience this powerful AI innovation freely.

FAQs

How does GPT-4o outperform the previous models?

The major advantage of GPT-4o over previous models is its enhanced multimodal capabilities, which enable it to hold real-time conversations and handle vision and audio inputs with lower latency.

Can GPT-4o help me translate languages?

Yes. GPT-4o comes with better multilingual capabilities and can act as a good translator for 50+ languages.

What is the knowledge cutoff date for GPT-4o?

The knowledge cutoff date, or the latest information GPT-4o was trained on, is October 2023.

Does GPT-4o have any limitations?

Despite its capabilities, GPT-4o still has limitations common to large language models, such as potential biases, hallucinations, and a lack of robust long-term memory. Its knowledge is also fundamentally limited to its training data.